Purpose-built AI for regulated lending: where SLMs fit and why it matters

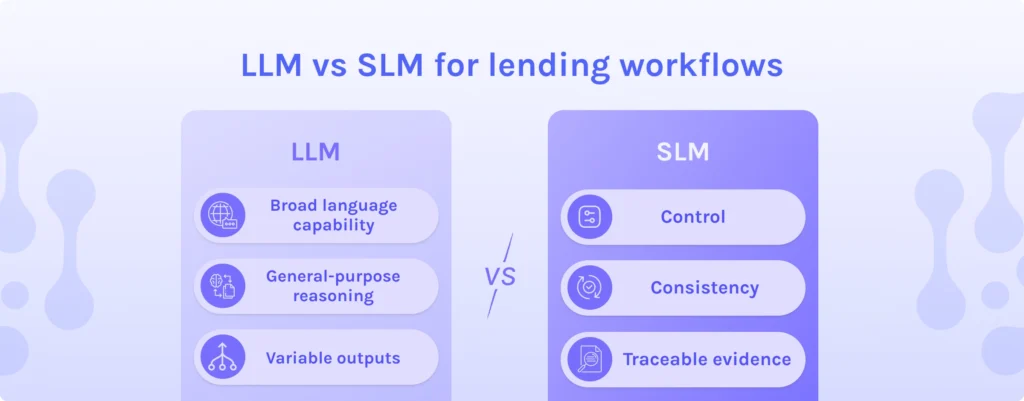

TL;DR: LLMs are powerful general-purpose models, but regulated lending workflows demand control, consistency and traceable evidence. Specialized language models can be a better fit for document-heavy underwriting because they focus on narrow tasks where accuracy and governance matter most. Ocrolus is an AI-powered workflow and data analytics platform that transforms messy documents and digital data into regulatory-grade decision intelligence so humans can make faster and more confident credit decisions.

Every lender is hearing it: “We’re LLM-powered,” or “We’re AI-powered.” Large language models have changed what software can do with language. But underwriting and funding operations are not judged by how fluent an output sounds. They are judged by whether their decisions are consistent, defensible and evidence-based.

That is why “bigger model” is not a shortcut to “better outcomes” in lending AI. In regulated workflows, the best technology is the one you can govern, validate and explain. For many document-heavy steps in mortgage and small business lending, specialized language models are often the better fit, and lenders are increasingly scrutinizing what a real lending AI stack looks like.

Quick definitions: LLM vs SLM

Large language models (LLMs) are general-purpose AI systems trained on massive datasets across many domains. They are strong at broad language tasks like summarization, Q&A and drafting. The tradeoff is that their outputs can be variable and may sound confident even when they are wrong, which is a poor fit for high-stakes verification.

Specialized language models (SLMs) are smaller or domain-focused models built for a narrower scope. They are designed to deliver higher accuracy and tighter control for specific workflows, especially when the job is to verify, reconcile and standardize information rather than generate open-ended language.

If you are evaluating “SLM vs LLM,” the decision should not be ideological. It should be workflow-driven.

Why regulated lending rewards control over capability

Regulated lending automation typically requires three things that broad, general-purpose AI is not designed to guarantee:

- Consistency: identical inputs should produce identical outputs

- Controls: clear constraints on what the system can and cannot do

- Defensibility: traceable evidence that supports every conclusion

The primary risk is not a lack of capability. It is a confident, untraceable output. A model that produces a plausible narrative without a clear evidence path can create operational rework and governance risk.

In practice, this is why lenders are increasingly separating “language capability” from “decision support.” Many early automation efforts stall not because of model performance, but because teams overlook governance and review, a common pitfall when lenders adopt AI for automation. Language can help with productivity. Decision support must stand up to review.
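To make the three requirements above concrete, here is a minimal sketch of a deterministic rule that returns the same result for the same inputs and carries its own evidence trail. Everything in it is an illustrative assumption: the `Finding` record, the `check_dti` rule, the `MAX_DTI` limit and the source references are hypothetical and do not describe any lender's policy or any vendor's implementation.

```python
from dataclasses import dataclass
import hashlib
import json

# Hypothetical policy limit, for illustration only.
MAX_DTI = 0.43


@dataclass(frozen=True)
class Finding:
    rule_id: str
    passed: bool
    evidence: tuple   # source references that support the conclusion
    input_hash: str   # fingerprint of the exact inputs that were evaluated


def fingerprint(inputs: dict) -> str:
    """Stable hash of the inputs, independent of key order."""
    canonical = json.dumps(inputs, sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def check_dti(inputs: dict) -> Finding:
    """Deterministic rule: no randomness and no hidden state."""
    dti = inputs["monthly_debt"] / inputs["monthly_income"]
    return Finding(
        rule_id="dti_max_v1",
        passed=dti <= MAX_DTI,
        evidence=tuple(inputs["sources"]),
        input_hash=fingerprint(inputs),
    )


application = {
    "monthly_debt": 2100,
    "monthly_income": 6000,
    "sources": ["paystub.pdf#page=1", "credit_report.pdf#page=3"],
}

first = check_dti(application)
second = check_dti(application)

# Consistency: identical inputs produce identical findings.
assert first == second
```

The rule ID and input hash give a reviewer a way to confirm which rule ran against which inputs, and the evidence tuple points back to the documents behind the conclusion.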

Where SLMs fit in AI in lending workflows

SLMs tend to excel in document-heavy tasks that sit in the critical path of loan origination and underwriting automation:

- Organizing and classifying incoming documents

- Extracting specific data points with high precision

- Normalizing information across formats and institutions

- Comparing extracted data against applications and policies

- Flagging discrepancies that require human review

These workflows are bounded and testable. Because of that, lenders often see better outcomes from fine-tuned AI models for financial processing than from broad, general-purpose systems. A bounded scope also enables tighter governance: SLMs can be trained and evaluated on domain-specific patterns, monitored for drift and paired with deterministic rules that enforce consistent behavior.

This is also where “intelligent document processing” either becomes a commodity or becomes decision intelligence. Extraction alone is not the finish line. The value is in validation, context and discrepancy detection that reduces touches and prevents downstream conditions.
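The compare-and-flag steps in the list above can be sketched as a small reconciliation routine. This is a simplified illustration under stated assumptions: the field names, the 5% relative tolerance, the `Discrepancy` record and the source references are all hypothetical, and a production system would also need to handle missing fields and differing pay periods.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class Discrepancy:
    field: str
    stated: Decimal
    extracted: Decimal
    source: str  # where the extracted value was found


def reconcile(stated: dict, extracted: dict,
              tolerance: Decimal = Decimal("0.05")) -> list:
    """Flag fields whose extracted value differs from the stated value
    by more than the relative tolerance."""
    flags = []
    for field, stated_value in stated.items():
        item = extracted.get(field)
        if item is None:
            continue  # this sketch skips fields with no extracted value
        value, source = item
        if stated_value == 0:
            differs = value != 0
        else:
            differs = abs(value - stated_value) / abs(stated_value) > tolerance
        if differs:
            flags.append(Discrepancy(field, stated_value, value, source))
    return flags


# Values the applicant stated on the application (hypothetical).
stated = {
    "monthly_income": Decimal("6000"),
    "monthly_rent": Decimal("1500"),
}

# Values extracted from documents, each paired with its source (hypothetical).
extracted = {
    "monthly_income": (Decimal("5200"), "bank_statement.pdf#page=2"),
    "monthly_rent": (Decimal("1525"), "lease.pdf#page=1"),
}

flags = reconcile(stated, extracted)
for flag in flags:
    print(f"{flag.field}: stated {flag.stated}, found {flag.extracted} ({flag.source})")
```

Here only the income field is flagged, because the rent difference falls inside the tolerance. The routine does not decide anything; it produces a short, sourced list for a human reviewer.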

Ocrolus’ technical approach: purpose-built AI plus validation and human review

Ocrolus is an AI-powered workflow and data analytics platform that has used AI in regulated production environments for over 10 years, well before large language models became mainstream. Lenders use Ocrolus via APIs, a dashboard and major loan origination system integrations to streamline underwriting, reflecting a broader shift toward vertical AI built specifically for lending workflows rather than horizontal tools.

Ocrolus uses purpose-built AI, including specialized language models, to prepare, validate and analyze financial data at scale. The approach is designed for governed workflows:

- Identify and organize incoming documents by type and relevance

- Extract and validate key fields with domain-specific accuracy

- Apply deterministic rules that support consistent outcomes

- Flag inconsistencies with clear explanations for reviewers

- Provide traceable evidence that links findings back to source documents

The goal is verification over versatility: preparing trusted, decision-ready context that is traceable back to source evidence and ready for human review. Humans stay in control. Ocrolus prepares and explains the data; humans make the decisions.
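As a generic illustration of evidence-linked extraction with human review, and not a description of Ocrolus' implementation, the sketch below attaches a source location to every extracted field and routes low-confidence fields to a reviewer. The `ExtractedField` record, the `REVIEW_THRESHOLD` value and the sample data are hypothetical.

```python
from dataclasses import dataclass

# Hypothetical cutoff; in practice thresholds would be set and validated per field.
REVIEW_THRESHOLD = 0.90


@dataclass(frozen=True)
class ExtractedField:
    name: str
    value: str
    confidence: float  # model confidence in [0, 1]
    document: str
    page: int


def evidence_link(field: ExtractedField) -> str:
    """Reference a reviewer can follow back to the source page."""
    return f"{field.document}#page={field.page}"


def route(fields):
    """Split fields into those accepted as data and those needing review.
    Accepting a field value is not a credit decision."""
    accepted, needs_review = [], []
    for field in fields:
        if field.confidence >= REVIEW_THRESHOLD:
            accepted.append(field)
        else:
            needs_review.append(field)
    return accepted, needs_review


fields = [
    ExtractedField("employer_name", "Acme Corp", 0.98, "paystub.pdf", 1),
    ExtractedField("gross_pay", "3000.00", 0.72, "paystub.pdf", 1),
    ExtractedField("account_number", "****1234", 0.95, "bank_statement.pdf", 2),
]

accepted, needs_review = route(fields)
for field in needs_review:
    print(f"Review {field.name}={field.value} at {evidence_link(field)}")
```

Keeping the source reference on every field, including the accepted ones, means any downstream finding can be traced to a specific page.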

What to ask AI vendors

When a vendor leads with “LLM-powered,” ask questions that reveal whether the system is governed for regulated lending:

- Which tasks use which models and why?

- How is extracted data validated and tested against known outcomes?

- What triggers human review and how are thresholds set?

- How is evidence presented so that a reviewer can verify the result quickly?

- What is your approach to AI risk management and monitoring over time?

The best AI lending platforms are not defined by a single model. They are defined by verification, governance and evidence.

Key takeaways

- LLMs are general-purpose models, but regulated lending requires control, consistency and traceable evidence

- SLMs can be a better fit for document-heavy underwriting tasks where workflows are bounded and testable

- Decision intelligence goes beyond extraction to validate, reconcile and explain discrepancies with evidence

- Ocrolus pairs purpose-built AI with deterministic rules and human review so teams move faster with confidence

- Vendor evaluation should focus on governance, validation and evidence presentation, not hype