From re-underwriting to verification: building a trusted data layer in mortgage insurance

TL;DR: In mortgage insurance, verification efficiency determines operating margin. Verification requires precise confirmation of income, assets and eligibility signals with audit-ready traceability. When it expands into manual reconstruction of borrower data, costs rise and scalability stalls. Many insurers rely on generic AI tools that classify documents but still require manual re-validation, creating a costly verification loop that can resemble re-underwriting. A purpose-built, AI-powered workflow and data analytics platform that delivers a trusted, evidence-linked data layer enables mortgage insurers to reduce duplicated labor, improve consistency across reviewers and protect operating margins while maintaining enterprise-grade reliability.

In the mortgage insurance sector, the primary objective is verification, not discovery. Unlike mortgage originators, who build a borrower’s credit case from the ground up, mortgage insurance teams confirm specific data points: income, assets, liabilities and eligibility signals.

In a market where pricing power is limited and operating margin matters most, the ability to verify those data points with speed and defensibility determines whether operations scale efficiently or absorb unnecessary cost.

When verification expands into re-underwriting behavior

Verification should focus on confirming key facts. But when outputs lack traceability or transparency, reviewers must reconstruct portions of the analysis to gain confidence in the file.

- If calculated income is not clearly tied to source documents, it must be rebuilt.

- If discrepancies are not flagged systematically, they must be reconciled manually.

- If policy logic is unclear, reviewers must re-evaluate eligibility criteria independently.

Over time, this behavior can begin to resemble re-underwriting in effort and time, even though the insurer’s role remains verification. What should be a targeted review becomes a broad validation exercise.

This dynamic reflects how teams respond when data lacks transparency.

The hidden burden of duplicated verification

The challenge is not the absence of digital documents. Mortgage insurance teams already operate in a document-rich environment. The challenge is decision variability.

When the extracted data lacks mortgage-specific context, results can differ from reviewer to reviewer. If an underwriter cannot immediately see the source evidence behind a calculated income figure, the safest course is manual recalculation.

In some environments, automation tools classify documents while separate review teams validate values and calculations. The result is duplicated labor, where verification effort approaches the time and complexity of a deeper underwriting review.

Operational gains materialize only when the data layer is robust enough to support verification without secondary manual reconstruction.

Identifying the AI wrapper problem

The market has no shortage of tools positioned as AI for lending. Many excel at document classification. Far fewer deliver decision-ready data.

When a solution labels documents but requires manual validation of every critical value, the workflow remains fundamentally unchanged. Reviewers still reconcile discrepancies, rebuild calculations and confirm policy alignment independently.

In those cases, verification does not scale. Efficiency gains flatten. Headcount grows in proportion to volume.

Mortgage insurance workflows are policy-heavy and exception-driven. Horizontal tools often struggle to encode the nuance required for consistent outcomes. A trusted data layer must go beyond classification. It must standardize key fields, link every value to source evidence and systematically surface discrepancies for resolution.

Shifting to a verticalized data layer

A trusted data layer transforms messy, unstructured documents into audit-ready outputs: it standardizes key fields, links each value to its source evidence and flags discrepancies for reviewer resolution. Instead of receiving a bare data point, an underwriter sees every figure traced to its source in the loan file. In well-scoped workflows, this transparency can reduce review time from hours of manual reconstruction to minutes of targeted verification.
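To make the idea of an evidence-linked value concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not any vendor's actual schema: the class names (`Evidence`, `LinkedValue`, `Discrepancy`), the field names, the sample figures and the 2% tolerance are all hypothetical. It shows the three behaviors described above: a standardized field name, a pointer from each value back to its source and a systematic discrepancy flag.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class Evidence:
    """Pointer back to where a value was read in the loan file."""
    document_id: str
    page: int
    snippet: str


@dataclass(frozen=True)
class LinkedValue:
    """A standardized field value that carries its own source evidence."""
    field: str  # standardized field name (hypothetical), e.g. "monthly_income"
    value: Decimal
    evidence: Evidence


@dataclass(frozen=True)
class Discrepancy:
    """The lowest and highest conflicting values found for one field."""
    field: str
    low: LinkedValue
    high: LinkedValue


def flag_discrepancies(values, tolerance=Decimal("0.02")):
    """Return one Discrepancy per field whose sources disagree.

    Sources disagree when the spread between the lowest and highest value
    exceeds `tolerance` as a fraction of the larger absolute value.
    The 2% default is an arbitrary illustrative threshold.
    """
    by_field = {}
    for v in values:
        by_field.setdefault(v.field, []).append(v)

    flagged = []
    for field, group in by_field.items():
        low = min(group, key=lambda v: v.value)
        high = max(group, key=lambda v: v.value)
        scale = max(abs(low.value), abs(high.value))
        if scale == 0:
            continue  # every value is zero, so there is nothing to compare
        if (high.value - low.value) / scale > tolerance:
            flagged.append(Discrepancy(field, low, high))
    return flagged


# Made-up sample data: two documents disagree on income, one reports assets.
values = [
    LinkedValue("monthly_income", Decimal("6500.00"),
                Evidence("paystub-01", 1, "Gross pay 6,500.00")),
    LinkedValue("monthly_income", Decimal("5900.00"),
                Evidence("voe-01", 2, "Monthly base 5,900.00")),
    LinkedValue("liquid_assets", Decimal("42000.00"),
                Evidence("bank-stmt-03", 4, "Ending balance 42,000.00")),
]

flagged = flag_discrepancies(values)
for d in flagged:
    print(d.field, d.low.evidence.document_id, d.high.evidence.document_id)
```

In this sketch only the income figures are flagged, and the reviewer is handed the two documents that disagree rather than having to rebuild the calculation from the whole file.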

Vertical AI matters because mortgage insurance is not a generic document task. It involves complex edge cases, specific overlays and evolving compliance expectations. A platform purpose-built for this environment can handle the nuances of varied income streams and complex asset statements with a consistency that is hard to maintain across human reviewers. By prioritizing decision quality and auditability, a trusted data layer reduces touch time while lowering operational risk.

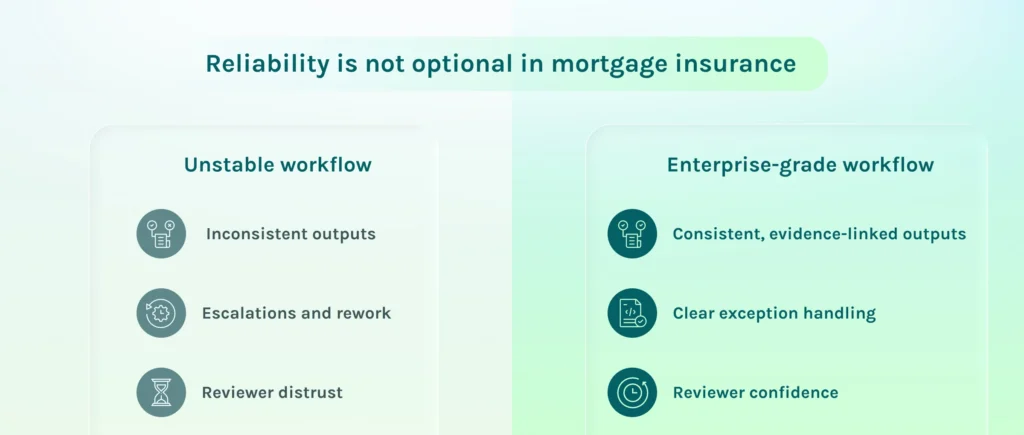

Enterprise-grade reliability as a product requirement

For mortgage insurance companies, stability is a prerequisite for technology adoption. These organizations operate in a regulated, capital-intensive environment where operational consistency and risk discipline are paramount. Any technology introduced into verification workflows must deliver predictable quality, clear exception handling and durable performance over time.

Mortgage insurance review cannot depend on tools that fluctuate in accuracy or require constant oversight. Enterprise-grade reliability means consistent outputs, transparent quality assurance and defensible audit trails that stand up under scrutiny.

Ocrolus delivers this through a combination of purpose-built AI, structured human-in-the-loop validation and universal calibration across best-in-class AI models. Universal calibration enables the platform to continuously fine-tune and benchmark multiple leading AI technologies against labeled financial data, sustaining high accuracy even as document formats and borrower complexity evolve.
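The benchmarking half of that idea can be sketched in a few lines of Python. This is not Ocrolus's implementation, and it does not cover fine-tuning. It only illustrates, under assumed names and made-up data, what it means to score several models against a labeled set and pick the strongest one per field. The function names, model names, field names and values are all hypothetical.

```python
from collections import defaultdict


def field_accuracy(predictions, labels):
    """Share of labeled values each model extracted exactly right, per field.

    predictions: {model_name: {(doc_id, field): extracted_value}}
    labels:      {(doc_id, field): true_value}
    Returns:     {field: {model_name: accuracy between 0 and 1}}
    """
    hits = defaultdict(lambda: defaultdict(int))
    totals = defaultdict(int)
    for (doc_id, field), truth in labels.items():
        totals[field] += 1
        for model, output in predictions.items():
            # A missing prediction counts as a miss.
            if output.get((doc_id, field)) == truth:
                hits[field][model] += 1
    return {
        field: {model: hits[field][model] / n for model in predictions}
        for field, n in totals.items()
    }


def best_model_per_field(accuracy):
    """Pick the highest-scoring model per field (ties go to the first name)."""
    return {
        field: max(sorted(scores), key=scores.get)
        for field, scores in accuracy.items()
    }


# Made-up labeled set and model outputs, for illustration only.
labels = {
    ("d1", "gross_income"): "6500.00",
    ("d2", "gross_income"): "7200.00",
    ("d1", "ending_balance"): "42000.00",
    ("d2", "ending_balance"): "1300.00",
}
predictions = {
    "model_a": {
        ("d1", "gross_income"): "6500.00",
        ("d2", "gross_income"): "7200.00",
        ("d1", "ending_balance"): "42000.00",
        ("d2", "ending_balance"): "1800.00",
    },
    "model_b": {
        ("d1", "gross_income"): "6500.00",
        ("d2", "gross_income"): "7700.00",
        ("d1", "ending_balance"): "42000.00",
        ("d2", "ending_balance"): "1300.00",
    },
}

accuracy = field_accuracy(predictions, labels)
routing = best_model_per_field(accuracy)
print(routing)
```

Here each hypothetical model wins on a different field, which is the practical argument for benchmarking several models instead of depending on one.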

This orchestrated approach avoids dependency on a single static model and supports regulatory-grade decision intelligence across complex, policy-heavy workflows. By providing a trusted data layer rather than just an automation tool, mortgage insurers can scale verification, protect operating margins and focus on high-level risk management instead of redundant data reconciliation.

Building the foundation for scalable mortgage insurance operations

The transition from re-underwriting-style review to streamlined verification is the next evolution for mortgage insurance. By moving away from generic AI wrappers and toward a verticalized AI data layer, insurers can reduce duplicated labor and establish a consistent, defensible workflow.

Key takeaways:

- Mortgage insurance requires precise verification of income, assets and liabilities rather than general document processing.

- Generic AI wrappers can cause verification to resemble re-underwriting when reviewers must manually validate every value.

- Verticalized AI-powered platforms can reduce review time from hours to minutes in well-scoped workflows.

- A trusted data layer is essential for managing policy-heavy, exception-driven mortgage insurance workflows.

- Scalability in mortgage insurance is achieved through audit-ready analytics and human-in-the-loop validation.